Software Planning 101: Part 5/7

The Need for Different Execution Localities

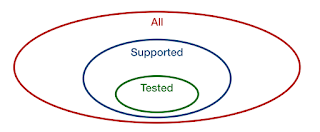

The software dimension execution locality describes which type of instructions run on which processing unit. It defines localities relative to each other rather than in absolute terms.

The Wish for A Single Locality

When you use only a single processing unit for your solution - e.g. a single logical core on a CPU - installing and managing the software is easy. A single binary suffices. No extra effort is needed to write code for communication and coordination between processing units. Latency for a single request is likely at an all-time low (if we ignore parallel processing of a single request, which usually makes the code so much more complex that it rarely benefits the solution).

Running your software on a single processing unit is the least complex option. Systems with more localities are far more complex. The destination service can be unavailable. A service has no explicit control over the sources that call it. Services need to coordinate with each other. Latency increases because of the extra communication and because of waiting on dependent services that are still processing another request. And the IT operations of such a solution are much more complex.

IT Operations

With multiple localities, IT operations usually faces more complex administration. A more detailed plan is therefore required for:

- distribution - how are the software packages made available to install?

- installation - how is each software package installed?

- configuration - how is the application, or each software package, configured?

- monitoring - how can we aggregate all the logs to monitor the complete solution?

- updates - how are updates to the software installed?

- recovery - how is the corrupted software restored to a working state?

- extensions - how are extensions, e.g. plugins, to the software installed?

The answers to those questions for a single locality are normally simple: there is only a single software package and only one host to install it on.

With multiple localities there are normally several different software packages, each with its own set of hosts to be installed on. For example, the configuration of such a software package often contains: settings for the logic configuration that are replicated on each host; settings that are specific to one host (node); and settings that are unique to the data set and are thus not found on the host.
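
The three kinds of configuration settings described above can be sketched as layered dictionaries. This is only an illustration, not a prescribed format: the setting names, the host names `node-a` and `node-b`, and the `effective_config` helper are all hypothetical.

```python
from collections import ChainMap

# All names and values below are hypothetical, for illustration only.

# Replicated on every host: the logic configuration.
shared_settings = {"log_level": "info", "request_timeout_s": 5}

# Specific to one host (node).
host_settings = {
    "node-a": {"listen_address": "10.0.0.11"},
    "node-b": {"listen_address": "10.0.0.12", "log_level": "debug"},
}

# Unique to the data set; stored with the data, not on the host.
dataset_settings = {"retention_days": 30}


def effective_config(host: str) -> dict:
    """Merge the layers; on conflicting keys the earlier layer wins."""
    layers = ChainMap(host_settings.get(host, {}), dataset_settings, shared_settings)
    return dict(layers)


print(effective_config("node-b"))
```

In this sketch host-specific settings override the replicated ones, which is one possible precedence rule; a real deployment has to decide and document its own.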

The Need for Multiple Localities

Although multiple localities are clearly no joy, avoiding them limits how performant, reliable and secure the solution can be. For enterprise-grade solutions this is often not the right trade-off. Therefore we need to introduce multiple localities to increase the performance, reliability and security of the software.

Performance

From a performance perspective, the following aspects must be considered:

- low latency - the time between issuing and completing a request;

- high throughput - the number of requests the software can handle per unit of time;

- low jitter - the variation in latency between requests; with much jitter some requests are fast while others are really slow;

- minimal error rate - the percentage of requests that did not succeed; almost no system never fails.
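
As a rough sketch, the four measures above can be computed from a list of observed requests. The sample values and the measurement window are made up, and using the standard deviation as the jitter measure is an assumption; other definitions of jitter exist.

```python
import statistics

# Hypothetical sample: (latency in seconds, succeeded?) for each request
# observed within one measurement window.
samples = [(0.120, True), (0.095, True), (0.480, False), (0.110, True), (0.105, True)]
window_seconds = 2.0

latencies = [latency for latency, _ in samples]

mean_latency = statistics.mean(latencies)
throughput = len(samples) / window_seconds   # requests per second
jitter = statistics.pstdev(latencies)        # spread of the latencies (assumed measure)
error_rate = sum(1 for _, ok in samples if not ok) / len(samples)

print(f"mean latency: {mean_latency:.3f} s")
print(f"throughput:   {throughput:.1f} requests/s")
print(f"jitter:       {jitter:.3f} s")
print(f"error rate:   {error_rate:.0%}")
```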

Future changes in how the software is used must also be accommodated. How do we handle it when:

- the average time a user spends using the software increases;

- the number of requests a user issues in the same amount of time increases;

- the number of users increases;

- the amount of data users are storing increases;

- the average size of a data item a user is storing decreases;

- the average size of a data item a user is storing increases.

Reliability

For reliability we must consider the different things that can make the system fail. Those risks are normally mitigated by redundancy.

- physical - what happens if the power or cooling systems of a facility with computers fail?

- hardware - what happens if a disk dies, or a memory bank gets corrupted?

- networking - what happens if there is network congestion, IP hijacking, or switch failure?

- host - what happens if the host, virtual machine, OS or hypervisor dies?

- service - what happens if a service crashes?

- data - what happens if there is some inconsistent or corrupted data?

Security

Another aspect is [application] security. There are five ways in which your application can be put at risk:

- malicious users

- stolen credentials

- exploiting existing bugs

- introduction of malware

- misconfigurations of software

Be aware of the potential impacts and decide what risk level is acceptable. Often the easy answer is: limiting. Although it is not a complete fix, limiting helps. The less configuration, the less that can be misconfigured. The fewer users, the fewer potential malicious users. The less software (including external software), the fewer bugs there are to exploit. The less connectivity, the harder it is to introduce malware.

For example: connections are limited by segmenting the network, and thus the software; the amount of software on a host is limited by introducing containers or virtual machines.

Extending the Locality

We will now look at the aspects of extending the locality of a software solution.

Multiple Cores (a.k.a. Concurrent Programming)

Characteristics: By running on separate cores, multiple cores and CPUs on the same host can be leveraged. Memory is shared between cores, so no explicit data transfer between cores is needed.

Benefits: Overall performance increases because more processing units are leveraged.

Drawbacks: Synchronization is needed for two cases: (1) multiple cores can read and update the same memory at the same time without each other's knowledge - thus corrupting the state of the software - and (2) different cores have their own caches, and outdated cached values need to be invalidated.
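
The first case can be illustrated with a lock around a shared counter. This is a minimal sketch with hypothetical names (`SharedCounter`, `worker`). Note that in standard CPython builds a global interpreter lock keeps threads from running Python bytecode on several cores at once, so the example shows the synchronization pattern rather than a speed-up.

```python
import threading


class SharedCounter:
    """A counter whose read-modify-write step is guarded by a lock."""

    def __init__(self) -> None:
        self.value = 0
        self._lock = threading.Lock()

    def increment(self) -> None:
        # Only one thread at a time may execute this block.
        with self._lock:
            self.value += 1


def worker(counter: SharedCounter, times: int) -> None:
    for _ in range(times):
        counter.increment()


counter = SharedCounter()
threads = [threading.Thread(target=worker, args=(counter, 10_000)) for _ in range(4)]
for thread in threads:
    thread.start()
for thread in threads:
    thread.join()

print(counter.value)  # 4 threads x 10,000 increments each
```

Without the lock, two threads could read the same old value and both write back that value plus one, losing an update.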

Multiple Processes

Characteristics: Processes are introduced to partition the code, limiting how much code can influence each other. Each process has its own instructions loaded and its own memory available.

Benefits: Since a process contains less code - and thus fewer bugs - security increases as there are fewer bugs to exploit, and reliability increases as fewer bugs can crash the process.

Drawbacks: Explicit communication must be written, using a shared disk, signaling, explicit shared memory, pipes or sockets. Some resources can only be claimed by a single process (e.g. server ports).
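
As a small sketch of explicit communication over pipes, a parent process can start a child and exchange text through the child's standard input and output. The child program and the message are made up for illustration.

```python
import subprocess
import sys

# The child is a separate process with its own memory. It reads one line
# from its standard input pipe and writes the result to its standard output pipe.
CHILD_CODE = "import sys; print(sys.stdin.readline().strip().upper())"

result = subprocess.run(
    [sys.executable, "-c", CHILD_CODE],
    input="hello from the parent\n",  # sent to the child through a pipe
    capture_output=True,              # the reply comes back through a pipe
    text=True,
    check=True,
)

reply = result.stdout.strip()
print(reply)
```

Nothing is shared implicitly here: every value the two processes exchange has to be serialized, sent and parsed.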

Multiple Hosts (a.k.a. Distributed Programming)

Characteristics: A host is an independently administered unit that is connected to the computer network.

Benefits: Performance increases beyond the scope of a single machine. Reliability increases as host-level outages won't take down the complete system.

Drawbacks: Each host needs to have a unique IP address and hostname configured. Stress on the network increases.
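
The reliability benefit only materializes if callers can cope with an unavailable host. The following sketch tries a list of hosts in order; the names `HostUnavailable`, `call_with_failover` and `fake_send` are hypothetical, and the network call is simulated rather than real.

```python
class HostUnavailable(Exception):
    """Raised by the (hypothetical) transport when a host cannot be reached."""


def call_with_failover(hosts, send):
    """Try each host in order and return the first successful reply."""
    failures = []
    for host in hosts:
        try:
            return send(host)
        except HostUnavailable as error:
            failures.append(f"{host}: {error}")
    raise HostUnavailable("all hosts failed: " + "; ".join(failures))


def fake_send(host):
    """Stand-in for a real network call; host-a is simulated as being down."""
    if host == "host-a":
        raise HostUnavailable("connection refused")
    return f"reply from {host}"


print(call_with_failover(["host-a", "host-b"], fake_send))
```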

Multiple Tiers (or Security Zones, Network Segments)

Characteristics: Hosts are grouped together and traffic between the groups passes through a single choke point.

Benefits: Security policies can be enforced at the choke point.

Drawbacks: Performance is negatively affected by the extra hop.
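
A policy enforced at the point between the groups can be as simple as an allow-list of flows. The tier names, ports and the `is_allowed` helper below are hypothetical; real enforcement would live in a firewall or gateway rather than in application code.

```python
# Hypothetical allow-list: (source tier, destination tier, destination port).
ALLOWED_FLOWS = {
    ("web", "app", 8080),
    ("app", "db", 5432),
}


def is_allowed(source_tier: str, destination_tier: str, port: int) -> bool:
    """Default deny: only explicitly listed flows may cross between tiers."""
    return (source_tier, destination_tier, port) in ALLOWED_FLOWS


print(is_allowed("web", "app", 8080))  # listed flow
print(is_allowed("web", "db", 5432))   # not listed: web may not reach db directly
```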

Multiple Availability Zones

Characteristics: An availability zone has its own power, cooling, networking and administration timeframe. Availability zones have independent availability/uptime characteristics.

Benefits: Reliability increases because most outages affect only a single zone and are thus mitigated.

Drawbacks: Extra logic is needed for replication of data.

Multiple Regions (or Data Centers, Geographic Areas)

Characteristics: A region has its own regulations. Data and functionality can be controlled per region to conform with those regulations.

Benefits: Security improves through more segregation of data and functionality.

Drawbacks: Extra logic needs to be implemented for federation. Performance is negatively affected by more network hops.
